News

[Blog] MistServer and Secure Reliable Transport (SRT)

What is Haivision SRT?

Secure Reliable Transport, or SRT for short, is a method to send stream data over unreliable network connections. Do note that it was originally meant for server-to-server delivery. The main advantage of SRT is that it allows you to push a playable stream over networks that otherwise would not work properly. However, keep in mind that for “perfect connections” it would just add unnecessary latency. So it is mostly something to use when you have to rely on public internet connections.

Requirements

- MistServer 3.0

Steps in this Article

- Understanding how SRT works

- How to use SRT as input

- How to use SRT as output

- How to use SRT over a single port

- Known issues

- Recommendations and best practices

1. Understanding how SRT works

SRT used to be done through a tool called srt-live-transmit. This executable would be able to take incoming pipes or files and send them out as SRT or listen for SRT connections to then provide the stream through standard out. We kept the usage within MistServer quite similar to this workflow, so if you have used this in the past you might recognize it.

Connections in SRT

To establish a SRT connection both sides need to find each other. For SRT that means that one SRT process is listening for incoming connections (Listener mode) and the other side will reach out to an address and call for the data (caller mode).

Listener mode

In SRT the listener means the side of the SRT connection that expects to receive the streaming data. By default in SRT this is the side that monitors a port and awaits a connection.

Caller mode

In SRT the caller means the side of the SRT connection that sends out the streaming data to the other point. By default in SRT this is the side that establishes the connection with the other side.

Rendezvous mode

In SRT, rendezvous mode is meant to adapt to the other side and take the opposite role. If a rendezvous connection connects to a SRT listener process it’ll become a caller. While this sounds handy, we recommend only using listener and caller mode. That way you’re always sure which side of the connection you are looking at.

Don’t confuse listener for an input or caller for an output

As you might have guessed from the defaults they do not have to apply in all cases. Many people confuse Listener for an input and Caller for an output. It is perfectly valid to have a SRT process listen to a port and send out streaming data to anyone that connects. That means that while it is listening, it is meant to be serving (outputting) data.

In most cases you will use the defaults for listener and caller, but it is important to know that they are not inputs or outputs. They only signify which side reaches out to the other and which side is waiting for someone to reach out.

Putting this into practice

The SRT scheme is as follows:

srt://[HOST]:PORT?parameter1&parameter2&parameter3&etc...

HOST This is optional. If you do not specify it, 0.0.0.0 is used, meaning all available network interfaces will be used.

PORT This is required. This is the UDP port to use for the SRT connection.

parameter This is optional, these can be used to set specific settings within the SRT protocol.

You can assume the following when using SRT:

- Not specifying a host in the scheme will imply listener mode for the connection.

- specifying a host in the scheme will imply caller mode for the connection.

- You can always overwrite a mode by using the parameter ?mode=caller/listener.

- Not setting a host will default the bind to 0.0.0.0, which uses all available network interfaces.

Some examples

srt://123.456.789.123:9876

This establishes a SRT caller process reaching out to 123.456.789.123 on UDP port 9876

srt://:9876

This establishes a SRT listener process monitoring UDP port 9876 using all available network interfaces

srt://123.456.789.123:9876?mode=listener

This establishes a SRT listener process using address 123.456.789.123 and UDP port 9876.

srt://:9876?mode=caller

This establishes a SRT caller process using UDP port 9876 on all available interfaces
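The host and mode rules above can be sketched in a few lines of Python. This is only an illustration using the standard library, not MistServer's actual parser. The function name srt_mode is hypothetical, and 192.0.2.10 is a placeholder documentation address.

```python
from urllib.parse import parse_qs, urlsplit


def srt_mode(url: str) -> str:
    """Return the connection mode an SRT URL implies under the rules above."""
    parts = urlsplit(url)
    if parts.scheme != "srt":
        raise ValueError(f"not an SRT URL: {url!r}")
    if parts.port is None:
        raise ValueError("the port is required")

    # An explicit ?mode= parameter always overrides the default.
    params = parse_qs(parts.query)
    if "mode" in params:
        return params["mode"][0]

    # Otherwise a host implies caller mode and no host implies listener mode.
    return "caller" if parts.hostname else "listener"


print(srt_mode("srt://192.0.2.10:9876"))                # caller
print(srt_mode("srt://:9876"))                          # listener
print(srt_mode("srt://192.0.2.10:9876?mode=listener"))  # listener
print(srt_mode("srt://:9876?mode=caller"))              # caller
```

Note that the result says nothing about whether the process is an input or an output. It only tells you which side reaches out and which side waits.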

2. How to use SRT as input

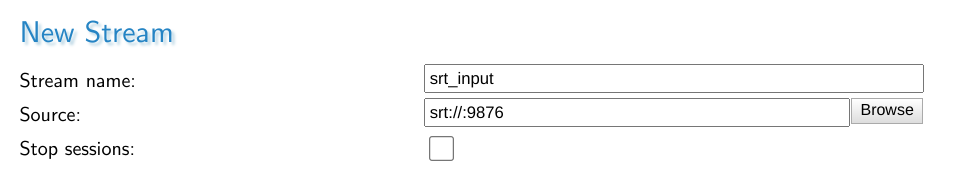

Both caller/listener inputs can be set up by creating a new stream through the stream panel.

SRT LISTENER INPUT

SRT listener input means the server starts an SRT process that monitors a port for incoming connections and expects to receive streaming data from the other side. You can set one up using the following syntax as a source:

srt://:port

The above starts a stream srt_input with a SRT process monitoring all available network interfaces using UDP port 9876. This means that any address that connects to your server could be used for the other side of the SRT connection. The connection will be successful once a SRT caller process connects on any of the addresses the server can be reached on, using UDP port 9876.

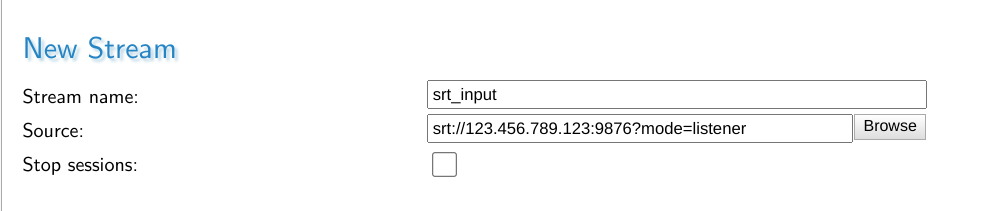

If you want to have SRT listen on a single address that is possible too, but you will need to add the ?mode=listener parameter.

srt://host:port?mode=listener

The above starts a stream srt_input with a SRT process monitoring the address 123.456.789.123 using UDP port 9876. The server must be able to use the given address here, otherwise it will not be able to start up the SRT process. The connection is successful once a SRT caller process connects on the given address and port.

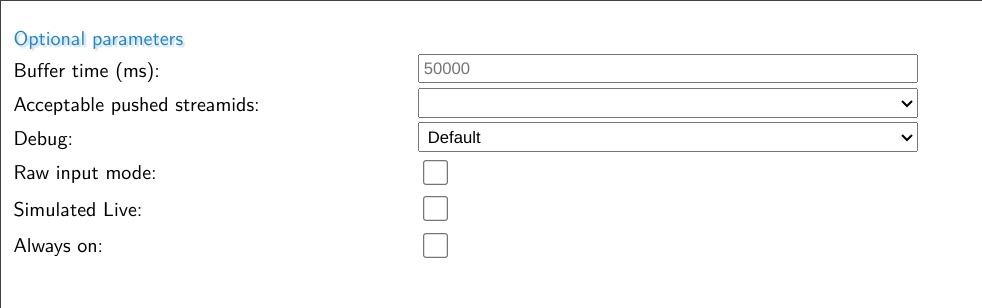

Important Optional Parameters

The optional parameters are available right under the Stream name and Source fields.

| Parameter | Description |
|---|---|
| Buffer time (ms) | This is the live buffer within MistServer; it is the DVR window available for a live stream. |
| Acceptable pushed streamids | Here you can choose what happens if the ?streamid=name parameter is used when SRT connections matching this input are made. It can become an additional stream (wildcard), it can be ignored (streamid is not used, it is seen as a push towards this input instead) or it can be refused. |
| Debug | Set the amount of debug information. |
| Raw input mode | If set, the MPEG-TS information is passed on without any sort of parsing/detection by MistServer. |
| Simulated live | If set, MistServer will not speed up the input in any way and will play out the stream as if it’s coming in in real time. |
| Always on | If set, MistServer will continuously monitor for streams matching this input instead of only when a viewer attempts to watch the stream. |

The most important optional parameter is the Always on flag. If this is set, MistServer will continuously monitor the given input address for matching SRT connections. If this is not set, MistServer will only monitor for matching SRT connections for about 20 seconds after a viewer tries to connect.

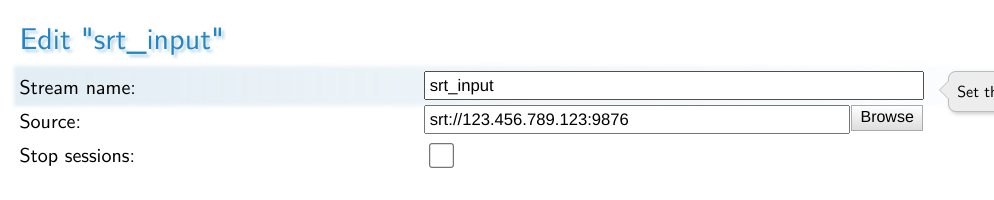

SRT CALLER INPUT

SRT Caller input means the server starts a SRT process that reaches out to another location in order to receive a stream.

srt://host:port

The above starts a SRT process that reaches out to the address 123.456.789.123 using UDP port 9876. In order for the SRT connection to be successful there needs to be a SRT listener process on the given location and port.

While it is technically possible to leave the host out of the scheme and go for a source like:

srt://:port?mode=caller

It is not recommended to use. The whole idea of being the caller side of the connection is that you specifically know where the other side of the connection is. If you need an input capable of being connected to by unknown addresses you should be using SRT Listener Input.

3. How to use SRT as output

SRT can be used as both a Listener output or a Caller output. A listener output means you wait for others to connect to you and then you send them the stream. Caller output means you send it towards a known location.

SRT LISTENER OUTPUT

There are two methods within MistServer to set up a SRT listener output. You can set up a very specific one through the push panel or a generic one through the protocol panel.

The difference is that setting up the SRT output over the push panel allows you to use all SRT parameters, which is important if you want to use parameters such as ?passphrase=passphrase, which enforces an encryption passphrase that must match or the connection is cancelled.

Setting SRT through the protocol panel only allows you to set a protocol. Anyone connecting to that port will be able to request all streams within MistServer.

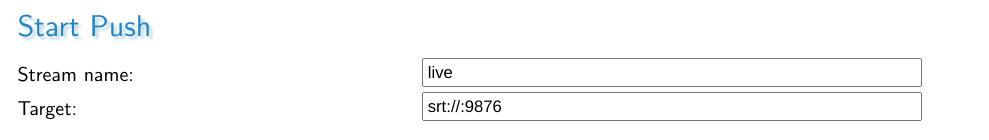

Push panel style

Setting up SRT LISTENER output through the push panel is perfect for setting up very specific SRT listener connections. It allows you to use all SRT parameters while setting it up.

Set up a push stream with target:

srt://:port?parameters

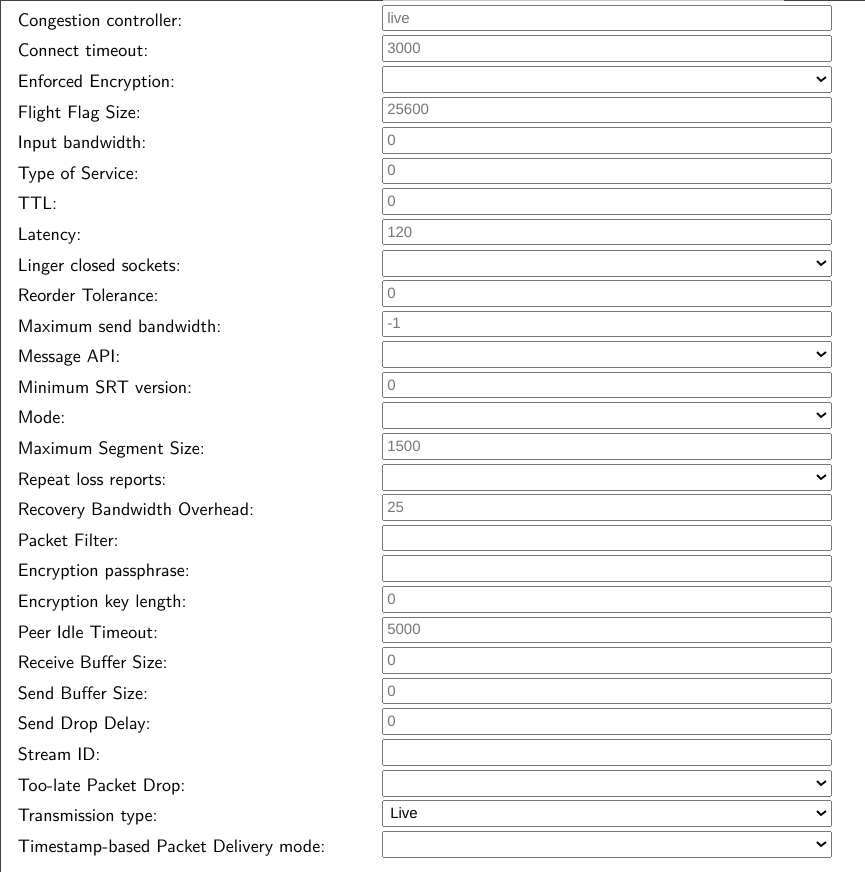

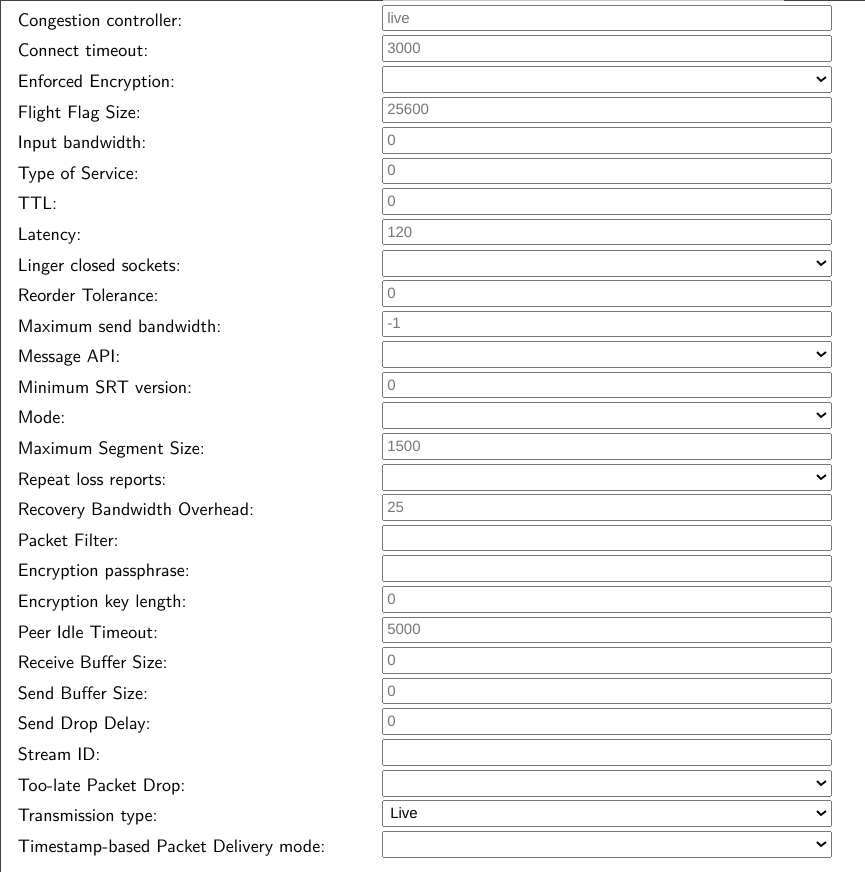

Once the SRT protocol is selected all SRT parameters become available at the bottom.

Using the SRT parameter fields here is the same as adding them as parameters. You could use this to set a unique passphrase for pulling SRT from your server, which will be output-only.

If you add a host to the SRT scheme make sure you set the mode to listener.

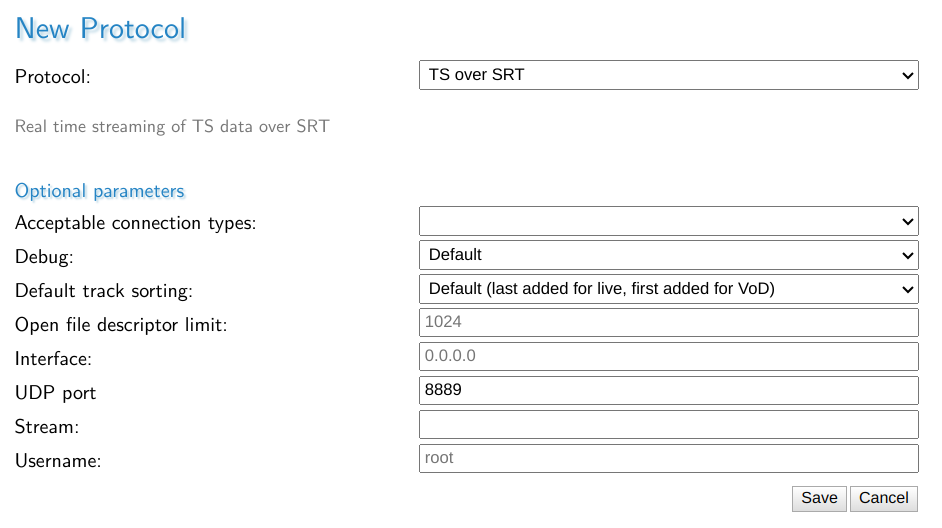

Protocol Panel Style

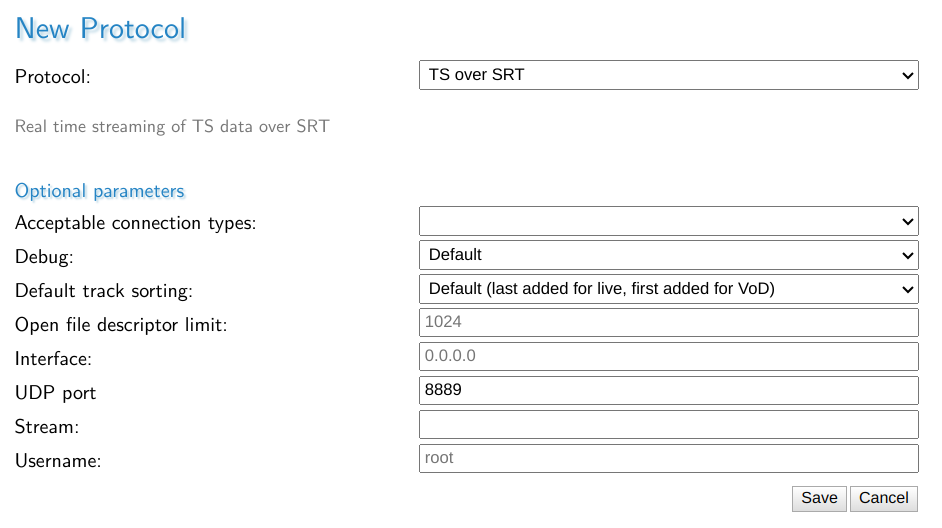

Setting up SRT Listener output through the protocol panel is done by selecting TS over SRT and setting up the UDP port to listen on.

You can set the Stream, which means that anyone connecting directly to the chosen SRT port will receive the stream matching this stream name within MistServer.

However, leaving the Stream unset allows you to connect to this port and use the ?streamid=stream_name parameter to select any stream within MistServer.

For example, to connect to the stream srt_input one could use the following SRT address:

srt://mistserveraddress:8889?streamid=srt_input

SRT CALLER OUTPUT

Setting up SRT caller output can only be done through the push panel. The only difference with a SRT listener output through the push panel is the mode selected.

Automatic push vs push

Within MistServer an automatic push will be started and restarted as long as the source of the push is active. This is often the behaviour you want when you send out a push towards a known location. Therefore we recommend using Automatic pushes.

Setting up SRT CALLER OUTPUT

srt://host:port

The above would start a push of the stream live towards address 123.456.789.123 using UDP port 9876. The connection will be successful if a SRT listening process is available there.

Using the SRT parameter fields here is the same as adding them as parameters.

4. How to use SRT over a single port

SRT can also be set up to work through a single port using the ?streamid parameter. Within the MistServer Protocol panel you can set up SRT (default 8889) to accept connections coming in, out or both.

If set to incoming connections, this port can only be used for SRT connections going into the server. If set to outgoing, the port will only be available for SRT connections going out of the server. If set to both, SRT will try to listen first and if nothing happens in 3 seconds it will start trying to send out a connection when contact has been made. Do note that we have found this functionality to be buggy on some Linux distributions (Ubuntu 18.04) and on highly unstable connections.

Once set up you can use SRT in a similar fashion as RTMP or RTSP. You can pull any available stream within MistServer using SRT and push towards any stream that is setup to receive incoming pushes. It makes the overall usage of SRT a lot easier as you do not need to set up a port per stream.

Pushing towards SRT using a single port

Any stream within MistServer set up with a push:// source can be used as a target for SRT. What you need to do is push towards

srt://host:port?streamid=streamname

For example, if you have the stream live set up with a push:// source and your server is available on 123.456.789.123 with SRT available on port 8889 you can send a SRT CALLER output towards:

srt://123.456.789.123:8889?streamid=live

And MistServer will ingest it as the source for stream live.

Pulling SRT from MistServer using a single port

If the SRT protocol is set up you can also use the SRT port to pull streams from MistServer using SRT CALLER INPUT.

For example, if you have the stream vodstream set up and your server is available on 123.456.789.123 with SRT available on port 8889 you can have another application/player connect through SRT CALLER:

srt://123.456.789.123:8889?streamid=vodstream

5. Known issues

The SRT library we use for the native implementation has one issue in some Linux distros. Our default usage for SRT is to accept both incoming and outgoing connections. Some Linux distros have a bug in the logic there and could get stuck waiting for data while they should be pushing out when you’re trying to pull an SRT stream from the server.

If you notice this you can avoid the issue by setting a port for outgoing SRT connections and another port for incoming SRT connections. This setup will also save you ~3 seconds of latency when used. The only difference is that the port changes depending on whether the stream data comes into the server or leaves the server.

6. Recommendations and best practices

One port for input, one for output

The most flexible method of working with SRT is using SRT over a single port. Truly using a single port brings some downsides in terms of latency and stability however.

Therefore we recommend setting up 2 ports, one for input and one for output and then using these together with the ?streamid parameter.

This has the benefit of making it easier to understand as well, one port handles anything going into the server, the other port handles everything going out of the server.
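As a rough sketch of what the two-port setup looks like from the client side, the helper below builds push and pull addresses with the ?streamid parameter. The class name SrtPorts and the port numbers 8889 (input) and 8890 (output) are assumptions for illustration; use whatever ports you configured in the protocol panel.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SrtPorts:
    """Hypothetical description of a server with separate SRT ports."""

    host: str
    input_port: int   # assumed port set to accept incoming connections only
    output_port: int  # assumed port set to accept outgoing connections only

    def push_url(self, stream: str) -> str:
        # Address an encoder (SRT caller) pushes to; the stream needs a push:// source.
        return f"srt://{self.host}:{self.input_port}?streamid={stream}"

    def pull_url(self, stream: str) -> str:
        # Address a player or another server (SRT caller) pulls from.
        return f"srt://{self.host}:{self.output_port}?streamid={stream}"


server = SrtPorts("mistserveraddress", input_port=8889, output_port=8890)
print(server.push_url("live"))       # srt://mistserveraddress:8889?streamid=live
print(server.pull_url("vodstream"))  # srt://mistserveraddress:8890?streamid=vodstream
```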

Getting SRT to work better

There are several parameters (options) you can add to any SRT url to configure the SRT connection. Anything using the SRT library should be able to handle these parameters. These are often overlooked and forgotten. Now understand that the default settings of any SRT connection cannot be optimized for your connection from the get go. The defaults will work under good network conditions, but are not meant to be used as is in unreliable connections.

If SRT does not provide good results through the defaults it’s time to make adjustments.

A full list of options you can use can be found in the SRT documentation.

Using these options is as simple as setting a parameter within the url, making them lowercase and stripping the SRTO_ part. For example SRTO_STREAMID becomes ?streamid= or &streamid= depending on if it’s the first or following parameter.
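The naming rule above is mechanical enough to express as a tiny helper. This is a minimal sketch; the function name srto_to_param is hypothetical, and it only converts the name without checking whether the option actually exists or is accepted in a URL.

```python
def srto_to_param(option: str) -> str:
    """Turn an SRT option name such as SRTO_STREAMID into its URL parameter name."""
    prefix = "SRTO_"
    if not option.startswith(prefix):
        raise ValueError(f"expected a name starting with {prefix}: {option!r}")
    # Strip the SRTO_ part and make the rest lowercase.
    return option[len(prefix):].lower()


print(srto_to_param("SRTO_STREAMID"))  # streamid
print(srto_to_param("SRTO_LATENCY"))   # latency
```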

We highly recommend starting out with the parameters below as they are the most likely candidates to provide better results.

Latency

latency=120ms

Default 120ms

This is what we consider the most important parameter to set for unstable connections. Simply put, it is the time SRT will wait for other packets coming in before sending it on. As you might understand if the connection is bad you will want to give the process some time. It’ll be unrealistic to just assume everything got sent over correctly at once as you wouldn’t be using SRT otherwise! Haivision themselves recommend setting this as:

RTT_Multiplier * RTT

RTT = Round Trip Time, basically the time it takes for the servers to reach each other back and forth. If you’re using ping or iperf remember you will need to double the ms you get.

RTT_Multiplier = A multiplier that indicates how often a packet can be sent again before SRT gives up on it. The values are between 3 and 20, where 3 means perfect connection and 20 means 100% packet loss.

Now what Haivision recommends is using their table depending on your network constraints. If you don’t feel like calculating the proper value you can always take a stepped approach and test the latency in 5 steps. Just start fine tuning once you reach a good enough result.

1: 4 x RTT

2: 8 x RTT

3: 12 x RTT

4: 16 x RTT

5: 20 x RTT

Keep in mind that setting the latency value higher will always add delay to the stream. The gain is stream quality, however. The best result is always a balancing act of latency and quality.
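The five test steps are simple arithmetic on the RTT you measured. A small sketch, assuming you already have the RTT in milliseconds; the name latency_steps is hypothetical.

```python
from typing import List

# The five multipliers from the stepped approach described above.
RTT_MULTIPLIERS = (4, 8, 12, 16, 20)


def latency_steps(rtt_ms: float) -> List[int]:
    """Return the latency values in ms to try, from least to most delay."""
    return [round(multiplier * rtt_ms) for multiplier in RTT_MULTIPLIERS]


print(latency_steps(50))  # [200, 400, 600, 800, 1000]
```

With an RTT of 50 ms you would first test latency=200, and only move up to the next value if the stream is still not good enough.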

Packetfilter

?packetfilter=fec-options

This option enables forward error correction, which in turn can help stream stability. A very good explanation on how to tackle this is available here. Important to note here is that it is recommended that one side has no settings and the other sets all of them. In order to do this the best you should have MistServer set no settings and any incoming push towards MistServer set the forward error correction filter.

We rarely have to use it, but when we do we usually start out with the following:

?packetfilter=fec,cols:8,rows:4

We start with this and have not had to switch it yet if mixed together with a good latency filter. Now optimizing this is obviously the best choice, but it helps to have a starting point that works.

Passphrase

?passphrase=uniquepassphrase

Needs at least 10 characters as a passphrase

This option sets a passphrase on the end point. When a SRT connection is made it will need to match the passphrase on both sides or else the connection is terminated. While it is a good method to secure a stream, it is only viable when a port is used for a single stream. If you were to use this option with the single port setup from Chapter 4, all streams through that port would use the same passphrase, making it quite unusable. If you’d like to use a passphrase while using a single port we recommend reading the PUSH_REWRITE token support post.

If you want to use passphrase for your output we recommend setting up a listener push using the push panel style as explained in Chapter 3. Setting up SRT as a protocol would set the same passphrase for all connections using that port, which means both input and output.
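Since a passphrase that is too short will not work, it can help to check the length before handing an address to someone. The sketch below only enforces the 10 character minimum mentioned above and assumes the passphrase contains URL-safe characters; the name with_passphrase is hypothetical.

```python
MIN_PASSPHRASE_LENGTH = 10  # minimum stated in this article


def with_passphrase(url: str, passphrase: str) -> str:
    """Append a passphrase parameter to an SRT URL after a length check."""
    if len(passphrase) < MIN_PASSPHRASE_LENGTH:
        raise ValueError(
            f"passphrase needs at least {MIN_PASSPHRASE_LENGTH} characters"
        )
    # The first parameter starts with ?, every following one with &.
    separator = "&" if "?" in url else "?"
    return f"{url}{separator}passphrase={passphrase}"


print(with_passphrase("srt://:9876", "uniquepassphrase"))
# srt://:9876?passphrase=uniquepassphrase
```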

Combining multiple parameters

To avoid confusion, these parameters work like any other parameters for urls. So the first one always starts with a ? while every other starts with an &.

Example:

srt://mistserveraddress:8890?streamid=streamname&latency=16000&packetfilter=fec,cols:4,rows:4
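If you generate these addresses from a script, the combining rule can be left to a URL library. A minimal sketch using Python's standard library; the function name build_srt_url is hypothetical, and keeping commas and colons unescaped is a choice made here so the packetfilter value stays readable and matches the example above.

```python
from urllib.parse import urlencode


def build_srt_url(host: str, port: int, **params) -> str:
    """Build an SRT URL; leave host empty to get a listener-style address."""
    url = f"srt://{host}:{port}"
    if params:
        # urlencode joins the parameters with &; the leading ? is added once.
        # safe=",:" keeps values like fec,cols:4,rows:4 unescaped.
        url += "?" + urlencode(params, safe=",:")
    return url


print(build_srt_url(
    "mistserveraddress",
    8890,
    streamid="streamname",
    latency=16000,
    packetfilter="fec,cols:4,rows:4",
))
# srt://mistserveraddress:8890?streamid=streamname&latency=16000&packetfilter=fec,cols:4,rows:4
```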

Conclusion

Hopefully this should’ve given you enough to get started with SRT on your own. Of course if there’s any questions left or you run into any issues feel free to contact us and we’ll happily help you!

[Blog] Migration instructions between 2.X and 3.X

With the release of 3.0, we are releasing a version that has gone through extensive rewrites of the internal buffering system.

Many internal workings have been changed and improved. As such, there was no way of keeping compatibility between running previous versions and the 3.0 release, making a rolling update without dropping connections not feasible.

In order to update MistServer to 3.0 properly, step one is to fully turn off your current version. After that, just run the installer of MistServer 3.0 or replace the binaries.

Process when running MistServer through binaries

- Shut down MistController

- Replace the MistServer binaries with the 3.0 binaries

- Start MistController

Process when running MistServer through the install script

Shut down MistController.

Systemd:

systemctl stop mistserver

Service:

service mistserver stop

Start MistServer install script:

curl -o - https://releases.mistserver.org/is/mistserver_64Vlatest.tar.gz 2>/dev/null | sh

Process for completely uninstalling MistServer and installing MistServer 3.0

Run:

curl -o - https://releases.mistserver.org/uninstallscript.sh 2>/dev/null | sh

Then:

curl -o - https://releases.mistserver.org/is/mistserver_64Vlatest.tar.gz 2>/dev/null | sh

Enabling new features within MistServer

You can enable the new features within MistServer by going to the protocol panel and enabling them. Some other protocols will have gone out of date, like OGG (to be added later on), DASH (replaced by CMAF) and HSS (replaced by CMAF, as Microsoft no longer supports HSS and has moved to CMAF themselves as well). The missing protocols can be removed/deleted without worry. The new protocols can be added manually or automatically by pressing the “enable default protocols” button

Rolling back from 3.0 to 2.x

Downgrading MistServer from 3.0 to 2.x will also run into the same issue that it is unable to keep connections active, which means you will have to repeat the process listed above with the end binaries/install link being the 2.x version. If you deleted the old 2.X protocols during the 3.X upgrade, you will have to re-add them using the same “enable default protocols” method as well. It is safe to have both sets in your configuration simultaneously if you switch between versions a lot, or need a single config file that works on both.

[Blog] Why is all of MistServer open source?

Hey there! This is Jaron, the lead developer behind MistServer and one of its founding members. Today is a very special day: we released MistServer 3.0 under a new license (Public Domain instead of aGPLv3) and decided to include all the features previously exclusive to the "Pro" edition of MistServer as well. That means there is now only one remaining version of MistServer: the free and open source version.

You may be wondering why we decided to do this, so I figured I'd write a blog post about it.

First some history! The MistServer project first started almost fifteen years ago, with a gaming-focused live streaming project that was intended to be a rival to the service that would later become known as Twitch. At the time, we relied on third-party technology to make this happen, and internet streaming was still in its infancy in general. Needless to say, that project failed pretty badly.

During a post-mortem meeting for that failed project, the live streaming tech we relied on came up as one of the factors that caused the project to fail. In particular how this software acted like a black box, and made it very tricky to integrate something innovative with it. The question came up if we could have done better, ourselves. We figured we probably could, and decided to try it. After all, how hard could it be to write live streaming server software, right..?

What we thought would be a short and fun project, quickly turned into something much bigger. The further we got, the more we discovered that video tech was - back then especially - a very closed off industry that is hard to get into. As we worked on the software, we came up with the idea that we wanted to change this. Open it up to newcomers, like we ourselves had tried, and make it possible for anyone with a good idea to make a successful online media service. There were several popular free and open source web server software packages, like Apache and Nginx - but all the media server software was closed (and usually quite expensive, as well). We wanted to do the same thing for media server software: create something open, free, and easy to use for developers of all backgrounds to enable creativity to flourish.

However, we also had people working on this software full-time that most definitely needed to be paid for their efforts. So while the first version of MistServer was already partially open source, we made a few hard decisions: we kept the most valuable features closed source, and the parts that were open were licensed under the aGPLv3. That license is an "infectious" open source license: it requires anyone communicating over a network with the software to get the full source code of the whole project. That would make it almost impossible to use in a commercial environment - both because of the missing features as well as the aggressive license.

That allowed us to then sell custom licensing and support contracts, while staying true to the ideas behind open source. Our plan was to eventually - as we had built up enough customer base and could afford to make this decision - release the whole software package as open source and solely sell support and similar services contracts. As we were funded by income from license sales, our growth was fairly restricted and thus slow and organic. We built up a good reputation, but were nowhere near being able to proceed with the plan we made at the start.

Over the years, we slowly did release some of the closed parts as open, but we had to be careful not to "give away" too much. To non-commercial users, we made available a very cheap version of the license without support. Our license and support contracts over time evolved to be mostly about support, and licensing itself more of an excuse to start discussing support terms. From my own interactions with our customers it has become clear that they stay with us because of the support we offer, and consider that the most valuable part of their contracts with us. However, the constrained growth did mean we were not able to fully commit to a business model that did not involve selling licenses.

Until, last October, Livepeer came along. They have a similar goal and mindset as the MistServer team did and does, which meant they not only understood our long-term plan, but believed that with the increased funding flow they brought to us, it could now finally be executed!

So, it may seem like a sudden change of course for us to release the full software as open source today, but nothing could be further from the truth. It's something we've believed in and have been wanting to do right from the start of the project. Words are lacking to describe how it feels to finally be able to come full circle and complete a plan that has been so long in the making. It's an extremely exciting moment for us, and I speak for the whole team when I say we're looking forward to continuing to improve and share MistServer with the world.

MistServer
